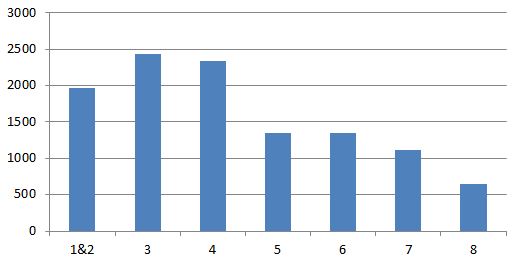

This is a pretty simple metric to look at but it might be quite revealing for your organisation. I’ve matched the sender of each email to their rank derived from the corporate directory. The rank is their position from the top of the directory: if A manages B and B manages C, and A is at the top of the directory, then A=1, B=2 and C=3. This approximates their grade; roughly, those ranked 1-2 are senior executives, 3-4 are middle management and 5+ get to do all the work. The chart shows the average number of emails sent at each rank over a number of months. Ranks 1 and 2 are combined because rank 1 is a very small sample.
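A minimal sketch of how the per-rank average could be computed, assuming you already have a count of emails sent per person and a rank per person. The function name and the sample figures are made up for illustration, and the average here is taken only over people who appear in both inputs.

```python
from collections import defaultdict

def average_emails_by_rank(sent_counts, ranks):
    """sent_counts: {person: emails sent}; ranks: {person: rank, 1 = top of directory}."""
    totals = defaultdict(int)
    people = defaultdict(int)
    for person, count in sent_counts.items():
        rank = ranks.get(person)
        if rank is None:
            continue  # sender not found in the directory, so no rank is known
        # Ranks 1 and 2 share a bucket because rank 1 is a very small sample
        bucket = "1-2" if rank <= 2 else str(rank)
        totals[bucket] += count
        people[bucket] += 1
    return {bucket: totals[bucket] / people[bucket] for bucket in totals}

# Made-up sample data: "e" has no directory entry and is skipped
sent = {"a": 40, "b": 60, "c": 90, "d": 110, "e": 30}
rank = {"a": 1, "b": 2, "c": 3, "d": 3}
averages = average_emails_by_rank(sent, rank)
print(averages)
```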


Combining Data to Weight Social Connections in an Organisation

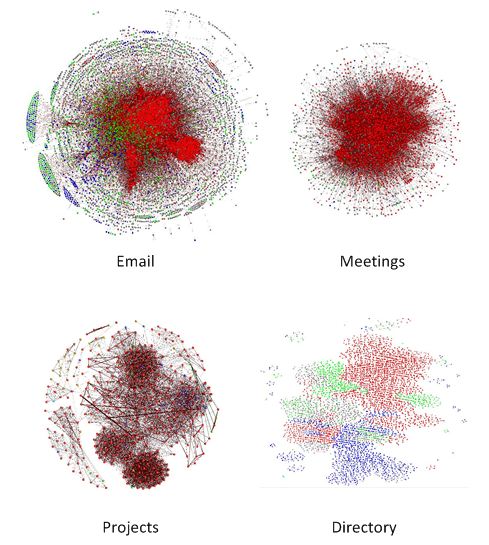

In the above diagram red nodes are from the division of the organisation under study; green and blue are from two other divisions and grey nodes are uncategorised or central functions.

I’ve previously described determining a ‘score’ for social connections taking data from email, meetings, directory and timesheets. The question is how to combine them to produce as complete a picture as possible from the data at hand. I’m fairly sure the best answer is not simply to add the scores together, but I’ve not found any guidance that would help me do anything else, so that’s exactly what I have done: add them up. To summarise, the score (or more correctly weight) given to each edge is made from:

- 1 point for each email exchanged (where there are a maximum of 10 recipients)

- 1 point for each minute in a one-to-one meeting, reducing rapidly as the number of attendees in the meeting increases

- 300 points for being in a manages/managed by relationship

- 1 point for every hour spent on a project divided by the number of people on the project
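The scoring above could be sketched roughly as follows. Several details are assumptions made only for illustration: the post does not give the exact rule for how meeting points fall as attendance grows (here they are divided by the square of the number of other attendees), nor how project hours are attributed to a pair of people (here the smaller of the two people's hours, divided by the team size). All names and sample data are hypothetical.

```python
from collections import defaultdict
from itertools import combinations

MANAGER_POINTS = 300
MAX_RECIPIENTS = 10

def pair(a, b):
    """Undirected edge key: the same tuple whichever way round the people are given."""
    return (a, b) if a <= b else (b, a)

def meeting_points(minutes, attendees):
    # ASSUMPTION: "reducing rapidly" is modelled as 1 / (other attendees squared),
    # so a one-to-one meeting scores 1 point per minute
    return minutes / ((attendees - 1) ** 2)

def combined_weights(emails, meetings, manages, projects):
    weights = defaultdict(float)
    for sender, recipients in emails:
        if len(recipients) <= MAX_RECIPIENTS:      # ignore broadcast emails
            for recipient in recipients:
                weights[pair(sender, recipient)] += 1
    for minutes, attendees in meetings:
        if len(attendees) < 2:
            continue                                # nobody to connect to
        points = meeting_points(minutes, len(attendees))
        for a, b in combinations(sorted(attendees), 2):
            weights[(a, b)] += points
    for manager, report in manages:
        weights[pair(manager, report)] += MANAGER_POINTS
    for hours_by_person in projects:
        team_size = len(hours_by_person)
        for a, b in combinations(sorted(hours_by_person), 2):
            # ASSUMPTION: a pair shares the smaller of their two hour totals
            shared = min(hours_by_person[a], hours_by_person[b])
            weights[(a, b)] += shared / team_size
    return dict(weights)

weights = combined_weights(
    emails=[("ann", ["bob"]), ("bob", ["ann"]), ("ann", ["bob", "cat"])],
    meetings=[(30, ["ann", "bob"]), (60, ["ann", "bob", "cat"])],
    manages=[("ann", "bob")],
    projects=[{"bob": 40, "cat": 20}],
)
print(weights)
```

With these made-up inputs the manager relationship dominates the ann-bob edge, which shows how sensitive the combined picture is to the 300-point constant.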

I’ve pulled this data together over the following periods:

- Email: 6 months

- Meetings: 2 years, 3 months

- Corporate Directory: 6 months (but this is very slow to change so probably reflects the vast majority of the last 2 years)

- Projects: 1 year

The coverage of the data also varies:

- Email: for the core of the organisation being studied this is excellent as the data comes from Exchange Server Logs, for the periphery there is limited coverage as only emails being exchanged with the core are captured

- Meetings: probably less than 50% as not all rooms are visible and not all meetings are booked in rooms; also teleconference information is not captured

- Corporate Directory: very good, 90%+ but data is limited to corporate hierarchy

- Timesheets: good but the system is not used universally as not everyone works on projects.

Some Observations using this approach:

- Email dominates the structure of the network, the others add very little for those in the core; however for those outside the core the others provide additional insight into the structure.

- There is overlap in these sources: for example, we expect a manager will share emails with their reports and that people on a project will have meetings together. However, as the coverage of each source is not complete, this is a small price to pay for seeing the whole network.

Despite the rather simplistic approach the results appear to work quite well but I’d love to hear from anyone who has implemented, or read about, a smarter way to combine these types of SNA sources.

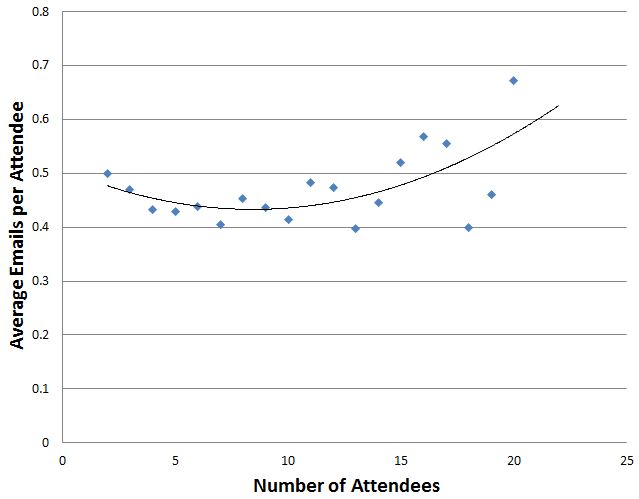

Effectiveness of Large Meetings

The previous discussion of Dunbar’s number suggests larger meetings will be less effective. Is there any data to support this? The following chart shows emails sent during meetings:

This seems to suggest that the larger the meeting, the less attention people are paying, but it is not a particularly remarkable result. I expect looking at instant message traffic during meetings would be more revealing.

Side Effects: Making Friends with your Exchange Server Administrator

I’m no Exchange Server expert, maybe there are some better built-in or downloadable tools for doing what I describe here, but if you’ve loaded emails into a relational database, as I’ve described previously, the following is extremely simple. In arranging to get log files from the Exchange server I asked the administrator if there was anything they wanted to know about that the logs might reveal and, indeed there were: it turned out they wanted to know who was sending the most email as storing it all was becoming more and more of a problem. After trying a few different ways of reporting on the logs we settled on the following: every time new logs were taken (about every 2 weeks) two queries are run: the first extracts the top 100 sender-to-recipient traffic by email count and the second extracts the same but by total message size (which is why I capture it). Quite often the culprits were system accounts including one that went a bit mad and sent 300,000 emails in 72 hours! Using these reports the Exchange administrator has managed to work with development teams to reduce the number of mails being generated by applications that it seemed no one was reading.
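As an illustration, the two reports might look something like this against a relational store. The table and column names (`email`, `recipient`, `size_bytes`) are assumptions rather than the schema actually used, and SQLite stands in for whatever database holds the logs.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE email (id INTEGER PRIMARY KEY, sender_id INTEGER, size_bytes INTEGER);
CREATE TABLE recipient (email_id INTEGER, recipient_id INTEGER);
-- Made-up data: sender 1 sends three small mails, sender 3 sends one large mail
INSERT INTO email VALUES (1, 1, 100), (2, 1, 100), (3, 1, 100), (4, 3, 5000);
INSERT INTO recipient VALUES (1, 2), (2, 2), (3, 2), (4, 2);
""")

# Only these two orderings are allowed, so the column name is never user input
ORDERINGS = {"count": "emails", "size": "total_bytes"}

def top_traffic(conn, by="count", limit=100):
    """Top sender-to-recipient pairs, ranked by email count or by total size."""
    order_column = ORDERINGS[by]
    sql = f"""
        SELECT e.sender_id, r.recipient_id,
               COUNT(*) AS emails, SUM(e.size_bytes) AS total_bytes
        FROM email e JOIN recipient r ON r.email_id = e.id
        GROUP BY e.sender_id, r.recipient_id
        ORDER BY {order_column} DESC
        LIMIT ?
    """
    return conn.execute(sql, (limit,)).fetchall()

print(top_traffic(conn, by="count"))
print(top_traffic(conn, by="size"))
```

Note that in this sketch a message sent to several recipients has its size counted once per recipient, which may or may not match how the server stores it.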

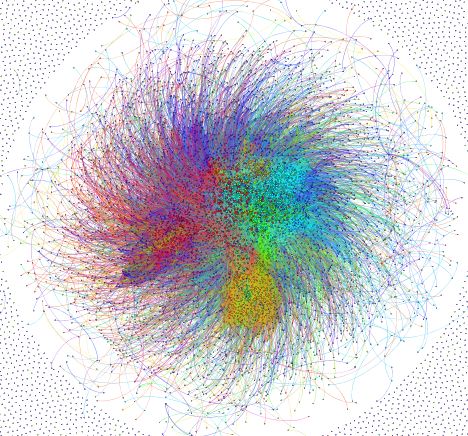

Show us your network

I’ve been taking a look at how to get graphs displayed in a browser. Here is the data I’ve been describing in previous posts; I can’t go into much detail about the meaning of this graph except that each node is an email account.

Email Hints & Tips

When working with email there are a few things that can trip you up, here are some tips for avoiding them:

- Always turn email addresses into a consistent case; I prefer lower but the choice is yours. Oh, and get rid of any leading or trailing spaces; you shouldn’t get any from the Exchange message tracking logs but make sure by trimming anyway.

- Use an integer ID as primary NOT the email address; email addresses can change over time and there will often be duplicates; using a key other than the address makes it easier to merge addresses when duplicates are detected (through an email aliases table)

- Ignoring broadcast emails: sometimes you may see an email sent from the CEO to everyone in the organisation – is this really indicative of a relationship, probably not. In fact any emails sent to more than a small group probably don’t give much indication of a social tie. There are a couple of options:

  - Ignore emails sent to more than n people; what n is is up to you, I’d say around 10

  - Use a formula to exponentially reduce the social network significance assigned to an email as the number of recipients increases. I’ll say more about this approach when I discuss some other sources of data.

- Ignoring system/technical accounts: you might see emails sent from non-personal accounts, e.g. “[email protected]”, and these should probably be ignored as they are usually just a broadcast of information revealing no social ties. How do you spot them? If you are lucky they will not conform to the same pattern as personal addresses (e.g. “firstname.surname@domain.com” versus “[email protected]”); otherwise you’ll have to construct a list. In my experience both were needed: the pattern match caught most but there were a number of exceptions that had to go into a list, so you just have to keep an eye out for them.
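The tips above could be sketched as a few small helpers. The address pattern, the `example.com` domain, the exceptions list and the decay factor are all hypothetical; the exponential formula in particular is just one possible choice, not the one referred to in the post.

```python
import re

# ASSUMPTION: personal addresses follow firstname.surname@example.com
PERSONAL_PATTERN = re.compile(r"^[a-z]+\.[a-z'-]+@example\.com$")
# ASSUMPTION: hand-maintained list of system accounts the pattern fails to catch
KNOWN_SYSTEM_ACCOUNTS = {"build.server@example.com"}

def normalise(address):
    """Trim whitespace and lower-case so the same mailbox always compares equal."""
    return address.strip().lower()

def is_personal(address):
    """True when the address looks personal and is not on the exceptions list."""
    address = normalise(address)
    return bool(PERSONAL_PATTERN.match(address)) and address not in KNOWN_SYSTEM_ACCOUNTS

def email_weight(n_recipients, max_recipients=10, decay=None):
    """Social significance of one email, given how many people received it.

    With decay=None this is a hard cut-off at max_recipients; otherwise the
    weight shrinks exponentially as decay ** (n_recipients - 1).
    """
    if decay is None:
        return 1.0 if n_recipients <= max_recipients else 0.0
    return decay ** (n_recipients - 1)

print(normalise("  Jane.Doe@Example.COM "))
print(is_personal("noreply@example.com"))
print(email_weight(3, decay=0.5))
```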

The Exchange server logs contain a message size. I have not yet found any use for this in understanding the social network but it’s useful to have when making friends with the Exchange server administrator, see my next article!

Enhancing Email with Directory Information

If you want to perform analysis comparing sub-sets of a graph derived from your email logs you will need to bring in some additional data, for example department. You might be lucky in that the organisation’s addressing scheme provides some clues, e.g. ‘[email protected]’ or ‘[email protected]’, but if not you will have to find another source. You might have an efficient HR department who could provide an extract of employees with department, role and any other data they don’t mind you having. Or they might not be so helpful. If HR won’t help there are some other sources: the directory service (e.g. Microsoft Active Directory) or maybe an intranet site that acts as a phonebook and/or organisational chart. Which one you select will depend on content and accuracy. In my case the intranet site provided both the most accurate and richest source of data. Each intranet is going to be different so the following may not work for yours, but I hope the approach provides some inspiration.

The intranet I have worked with is composed of two page types:

- A page describing the employee with phone numbers, job role, location, department and also some free-text fields allowing people to input experience, skills, what they work on and languages spoken.

- A Hierarchy page: given an employee it displays their manager and direct reports.

Luckily each page type could be brought up by knowing the employee and manager ID and constructing a fairly simple URL.

I thought it would be useful to understand where an employee sits in the overall structure so I wrote a program to traverse the directory top-down. Given a known starting point (the ID of the most senior employee and their manager, effectively blank) the algorithm worked like this:

1. Find all the direct reports of the current employee

2. Recursively call step 1 for each direct report (if any) and keep a count of how many there are

3. Get the directory information for the current employee

4. Store the directory information and the count of direct reports plus any other derived information (e.g. the employee’s level in the hierarchy and a list of all the managers above them)
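The traversal steps above can be sketched as a short recursive function. The two lookup callables stand in for whatever fetches and parses the intranet pages; they, the in-memory `ORG` dictionary and the choice to number the top of the hierarchy as rank 0 are assumptions for illustration.

```python
def traverse(employee_id, reports_of, details_of, rank=0, managers=(), out=None):
    """Walk the directory top-down, recording rank, span and the management chain.

    reports_of(id) -> list of direct report IDs   (hypothetical hierarchy-page lookup)
    details_of(id) -> dict of directory fields    (hypothetical employee-page lookup)
    """
    if out is None:
        out = {}
    reports = reports_of(employee_id)                       # find direct reports
    for report_id in reports:                               # recurse into each one
        traverse(report_id, reports_of, details_of,
                 rank + 1, managers + (employee_id,), out)
    record = dict(details_of(employee_id))                  # directory information
    record.update(rank=rank, span=len(reports), managers=list(managers))
    out[employee_id] = record                               # store with derived data
    return out

# Made-up organisation standing in for the intranet pages
ORG = {"ceo": ["vp1", "vp2"], "vp1": ["eng"], "vp2": [], "eng": []}
directory = traverse(
    "ceo",
    reports_of=lambda employee: ORG.get(employee, []),
    details_of=lambda employee: {"name": employee.upper()},
)
print(directory["eng"])
```

A real directory may contain loops (two people each listed as the other's manager), in which case a set of already-visited IDs would be needed to stop the recursion.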

Now unfortunately this does not find all the employees we have email records for; this turned out to be because the intranet directory is incomplete so as a second exercise:

- Find all the employees for which there is an email record but no directory entry recorded from the first phase

- Query the intranet directory by email address (fortunately this was a feature of the one I was using)

- Store the directory information

This second step could be used to populate the whole list but it does not provide such rich hierarchical information.

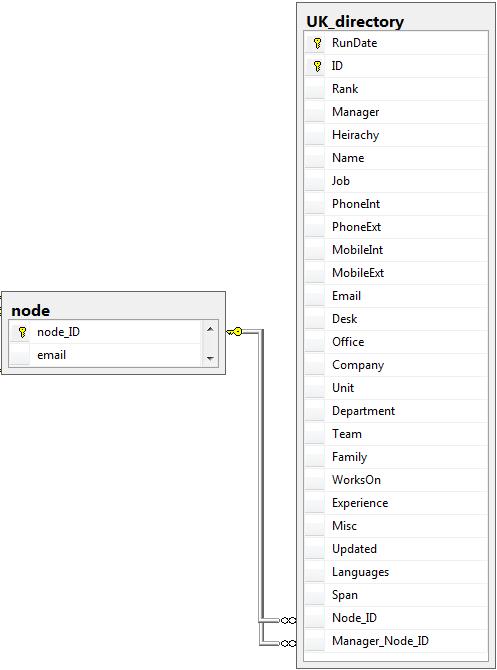

The directory information is best retained in its own table. This is because it changes over time and should be obtained periodically (e.g. monthly). However, a refresh of the directory data SHOULD NOT overwrite what is already there; instead, add a field that contains the date the data was fetched. This makes it possible to use the directory data that corresponds to the period being analysed, or to still derive a tie between people if A used to manage B, even if they no longer do.

Example directory table. Note: RunDate is the date the directory was traversed (there will be multiple runs in 1 table); ID and manager are internal IDs for the intranet directory; rank is the position in the hierarchy (0 highest); Span is the number of direct reports.
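A minimal sketch of how a dated directory table might be queried, assuming one row per employee per run. The column names follow the description above loosely (`rank_in_hierarchy` is used instead of `rank` purely to avoid any clash with SQL function names), and the dates and IDs are made up.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE directory (
        run_date TEXT,               -- date the directory was traversed (ISO format)
        id INTEGER,                  -- internal directory ID of the employee
        manager INTEGER,             -- internal directory ID of their manager
        rank_in_hierarchy INTEGER,
        span INTEGER,                -- number of direct reports
        PRIMARY KEY (run_date, id)
    )
""")
# Two runs: employee 2 moves from manager 1 to manager 3 between them
conn.executemany("INSERT INTO directory VALUES (?, ?, ?, ?, ?)", [
    ("2012-01-01", 2, 1, 1, 0),
    ("2012-02-01", 2, 3, 1, 0),
])

def manager_as_of(conn, employee_id, date):
    """Manager recorded in the most recent run on or before the given date."""
    row = conn.execute(
        "SELECT manager FROM directory WHERE id = ? AND run_date <= ? "
        "ORDER BY run_date DESC LIMIT 1",
        (employee_id, date),
    ).fetchone()
    return row[0] if row else None

def ever_managed(conn, manager_id, employee_id):
    """True if any run recorded this manager/report relationship."""
    row = conn.execute(
        "SELECT 1 FROM directory WHERE id = ? AND manager = ? LIMIT 1",
        (employee_id, manager_id),
    ).fetchone()
    return row is not None

print(manager_as_of(conn, 2, "2012-01-15"), manager_as_of(conn, 2, "2012-03-01"))
print(ever_managed(conn, 1, 2))
```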

Dealing with Sensitive Data (e.g. email Message Subject)

In previous entries about analysing email logs I mentioned the message subject, which can optionally be included. This can be considered sensitive information and how you deal with it will depend on the organisation concerned. The organisation I’ve previously described decided to allow the message subject to be extracted but not stored as-is; instead it was agreed that the message subject would be hashed (a one-way transformation that cannot be reversed to recover the text) and then stored. This is useful because it still allows conversations to be tracked, so that metrics like the average response time can be collected. There are a couple of other useful things to do to make the best of this approach:

- Before doing anything with the message subject turn the whole string into consistent case (upper or lower, your choice) otherwise “Hello” and “hello” give different hash values.

- Strip off the subject prefix (“RE:”, “FW:”) and do this repeatedly until none are left. Store the outermost prefix as-is (no hashing) and then hash and store the remainder of the message subject. In Social (Organisational) Network Analysis using email – Practical Architecture Part 1 the email table contains the fields ‘subject_prefix’ and ‘subject_hash’; this is what these fields store.

- Base64 encode the hashed value otherwise you’ll run into trouble with escape characters.
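Put together, the three steps might look like the sketch below. SHA-256 is an assumption (the post does not say which hash was used), as is treating “FWD:” as a prefix alongside “RE:” and “FW:”.

```python
import base64
import hashlib
import re

# ASSUMPTION: "FWD:" is handled as well as the "RE:" and "FW:" named in the text
PREFIX = re.compile(r"^\s*(re|fwd|fw)\s*:\s*", re.IGNORECASE)

def split_and_hash(subject):
    """Return (outermost prefix, Base64 hash of the remaining subject)."""
    outermost = ""
    rest = subject.strip()
    while True:
        match = PREFIX.match(rest)
        if not match:
            break
        if not outermost:
            outermost = match.group(1).upper() + ":"   # kept as-is, not hashed
        rest = rest[match.end():]                       # strip and try again
    # Lower-case before hashing so "Hello" and "hello" give the same value
    digest = hashlib.sha256(rest.lower().encode("utf-8")).digest()
    return outermost, base64.b64encode(digest).decode("ascii")

prefix, subject_hash = split_and_hash("RE: FW: Hello")
print(prefix, subject_hash)
print(split_and_hash("hello")[1] == subject_hash)
```

Because replies and forwards reduce to the same hash as the original message, rows with equal `subject_hash` values can be grouped into a conversation. A plain hash of a short, guessable subject can be matched by hashing candidate subjects, so a keyed hash (for example HMAC with a secret key) may be worth considering where that matters.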

Email analysis on a global scale

I saw this on LinkedIn; it shows the flow of email between countries (see the link below for a further explanation).

Could this be applied to communities within an organisation?

Social (Organisational) Network Analysis using email – Practical Architecture Part 2

Graph analysis relies on getting data into Nodes (sometimes called Vertices) and Edges. If you have, as I suggested, first built a relational database then a second extract-transform-load step is necessary to get the data into the graph structure. I’ll assume the simplest scenario to describe these steps, which is simply to take all the data.

Extract is fairly simple; there are two queries that can be run against the relational database. The first, to get the nodes, is just a straightforward select against the nodes table. The second, to get the edges, is not so simple and depends on how you want to analyse the data. I’ll assume almost the simplest approach: join the email table to the recipients table, select the Sender ID, the Recipient ID and a count(*), and group by the Sender ID and Recipient ID. This gives an edge for each sender-receiver pair plus a count of the number of emails; note this is directional: A sends B x emails and B sends A y emails. The simplest possible query could have omitted the count(*) but it is very useful as it gives an indication of the strength of the connection between two nodes (often called the Edge weight).

The Transform step could be omitted if the desired analysis can be performed with the nodes and directed edges; however, this is not always what’s wanted. If you want to understand the strength of a connection between nodes but don’t care about direction then a transform may be necessary. This can be achieved in other ways but it’s useful to understand how to do it in an intermediary step, because the same technique helps when combining data from more than one source. Bear in mind this works for 10,000 nodes: I like to use a hash table (C#) to build the edges. For each edge, first re-order the two nodes by ID and create a key by combining the IDs, then test the hash table to see if this key exists. If the key is not found, create a new entry using the key and store the count as the associated value; if the key already exists, retrieve the associated value, add on the count and save it back. The hash table will now contain undirected Edges and the associated combined message count; you can see it would be easy to add in additional counts from other sources to create a combined weight for the relationship.

The Load step is going to vary depending on the target but it’s basically taking the nodes as selected from the relational table and the edges from the hash table and getting them into the target. I’ll briefly explore three targets:

- NodeXL (remember, good for small sets of data, < 1000 nodes): if you are using .NET then it’s very simple to drop the NodeXL control onto a Windows form and get a reference to the underlying object model. Then whip through the nodes (or vertices as NodeXL calls them) and add them, followed by the Edges; for each Edge you need to get the Node IDs, which just requires a quick lookup directly from the NodeXL model.

- Gephi (can handle larger sets, easily 10,000 nodes): my favourite approach is to write out a .GEXF file following very much the method above but there is no need to look-up any internal references for the Edges, you just need the two Node IDs.

- Neo4j (can handle larger sets, easily 10,000 nodes): if you are writing in Java then I believe it’s possible to directly manipulate the object model (very much like you can with .NET and NodeXL) but I used the REST API, which is definitely fast enough when running on the same computer. There are some .NET libraries available that wrap the REST API but the level of maturity varies. I have found a problem specific to Neo4j, which is that it wants to create its own node IDs, which can’t be changed. When you create a new Node, Neo4j returns the ID which you will need when adding the Edges, so I suggest recording these in a hash table to map them to the Node ID from the relational database; otherwise you will need to query Neo4j to get the IDs every time you add an Edge.
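To make the extract, transform and load steps concrete, here is a rough end-to-end sketch in Python rather than C#, with a dictionary playing the part of the hash table and a minimal GEXF file as the target. The table names, sample rows and the `to_gexf` helper are assumptions for illustration, not the schema or code actually used.

```python
import sqlite3
import xml.etree.ElementTree as ET

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE node (id INTEGER PRIMARY KEY, address TEXT);
CREATE TABLE email (id INTEGER PRIMARY KEY, sender_id INTEGER);
CREATE TABLE recipient (email_id INTEGER, recipient_id INTEGER);
INSERT INTO node VALUES (1, 'a'), (2, 'b'), (3, 'c');
INSERT INTO email VALUES (10, 1), (11, 1), (12, 2), (13, 3);
INSERT INTO recipient VALUES (10, 2), (11, 2), (12, 1), (13, 1);
""")

# Extract: one query for the nodes, one for directed edges with a message count
nodes = conn.execute("SELECT id, address FROM node ORDER BY id").fetchall()
directed = conn.execute("""
    SELECT e.sender_id, r.recipient_id, COUNT(*)
    FROM email e JOIN recipient r ON r.email_id = e.id
    GROUP BY e.sender_id, r.recipient_id
""").fetchall()

# Transform: order each pair of IDs so A->B and B->A share one key, then sum
undirected = {}
for sender, recipient, count in directed:
    key = (min(sender, recipient), max(sender, recipient))
    undirected[key] = undirected.get(key, 0) + count

def to_gexf(nodes, edges):
    """Load: serialise nodes and weighted undirected edges as a minimal GEXF document."""
    gexf = ET.Element("gexf", xmlns="http://www.gexf.net/1.2draft", version="1.2")
    graph = ET.SubElement(gexf, "graph", defaultedgetype="undirected")
    nodes_el = ET.SubElement(graph, "nodes")
    for node_id, label in nodes:
        ET.SubElement(nodes_el, "node", id=str(node_id), label=label)
    edges_el = ET.SubElement(graph, "edges")
    for i, ((a, b), weight) in enumerate(sorted(edges.items())):
        ET.SubElement(edges_el, "edge", id=str(i),
                      source=str(a), target=str(b), weight=str(weight))
    return ET.tostring(gexf, encoding="unicode")

gexf_xml = to_gexf(nodes, undirected)
print(undirected)
print(gexf_xml)
```

Counts from other sources (meetings, projects) could be added into the same dictionary under the same keys before the load step, which is what makes the intermediate structure handy.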

I hope this has given an overview of how to get email logs into a graph-aware database or application by using an ETL step to first populate an intermediate relational database and a second ETL step to move from a relational to a graph structure. Keep an eye out for future postings in which I intend to drill into some of the detail and expand on the architecture described here.
