It’s been a while since I collected data for organisational SNA, but I recently had cause to look back at this work, which describes assigning weights to each data source. Many people have since left the organisation studied, which is easy to discover from org charts or HR records, but what about people who exchanged a lot of email or IM in the past and no longer do? Should recent data be given more weight than historic data? I’ve searched for guidance but not found any. Does anyone in this forum have any advice?
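One possible answer, offered only as a sketch and not something I have validated, is to decay each interaction's contribution exponentially with its age. A tie that was strong two years ago but is silent now then fades gradually rather than being dropped outright. The `(sender, recipient, date)` record shape and the 180-day half-life are assumptions chosen for illustration:

```python
from collections import defaultdict
from datetime import date, timedelta


def decayed_edge_weights(messages, as_of, half_life_days=180.0):
    """Undirected tie strengths where each message counts 0.5 ** (age / half-life).

    Assumptions: `messages` holds (sender, recipient, date) tuples and the
    180-day half-life is an arbitrary starting point, not a recommendation.
    """
    weights = defaultdict(float)
    for sender, recipient, sent_on in messages:
        age_days = (as_of - sent_on).days
        if age_days < 0:
            continue  # ignore anything dated after the reference date
        pair = tuple(sorted((sender, recipient)))  # treat the tie as undirected
        weights[pair] += 0.5 ** (age_days / half_life_days)
    return dict(weights)


as_of = date(2014, 1, 1)
sample = [
    ("A", "B", as_of),                        # today: full weight 1.0
    ("B", "A", as_of - timedelta(days=180)),  # one half-life old: 0.5
    ("A", "C", as_of - timedelta(days=360)),  # two half-lives old: 0.25
]
print(decayed_edge_weights(sample, as_of))  # {('A', 'B'): 1.5, ('A', 'C'): 0.25}
```

A shorter half-life favours current relationships. Building the network with a few different half-lives and checking which best matches what people know of the organisation might be one way to choose a value.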

SNA Quick Win: Outsourcing Organisational Design

When entering outsourcing arrangements, many organisations like to introduce the role of “Relationship Manager”, which is supposed to act as the interface, or at least an initial broker, between the two organisations. Each party tends to have these roles, and the amount of informal communication that bypasses them varies with the levels of trust and formality. The question is how many people should be in these roles, and between which groups in the two organisations should they be placed?

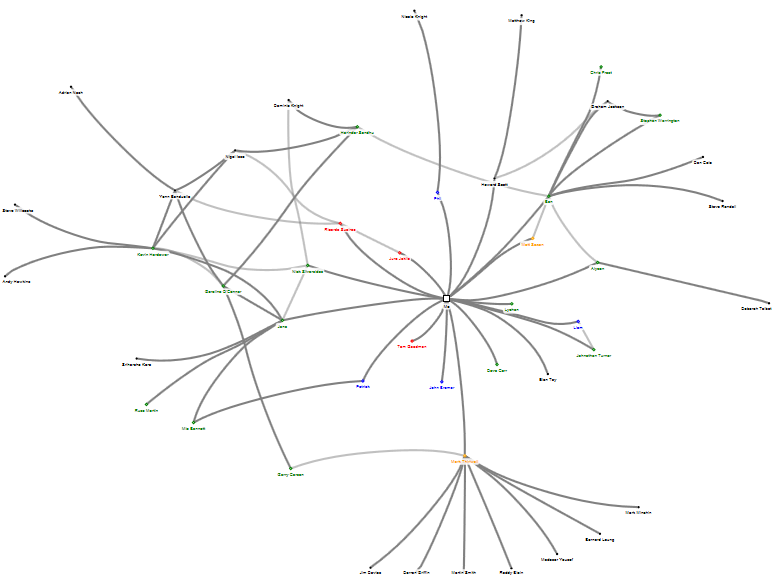

Looking at the original organisation, prior to outsourcing, some simple SNA tools can help: by measuring the communication between the two groups (retained and outsource target) it is possible to see who the key people bridging the groups are and the volume of that communication. Using a tool like Gephi and running a layout such as Fruchterman-Reingold, this can be visualised as shown below:

Note that in the centre there are some individuals with strong ties between the two groups; this would be a prime place to consider placing relationship managers. There are also a couple of other interesting observations: (1) there are a lot of weaker relationships between individuals in the two groups which, taken individually, might not seem significant but when added up are significant and must be considered; (2) some individuals appear to be in the wrong group (e.g. the red dots amongst the green dots) and their allocation to the ‘retained’ or ‘outsource’ group should be reconsidered.
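The same observations can also be made numerically, which helps when the picture is too dense to read. This is a minimal sketch, assuming message records as `(sender, recipient)` pairs and a `group_of` mapping from person to "retained" or "outsource" (both hypothetical shapes). It counts each person's cross-group messages to find candidate bridges, and it flags people who talk to their own group less than half the time. The 0.5 threshold is an arbitrary assumption:

```python
from collections import Counter, defaultdict


def cross_group_volume(messages, group_of):
    """Per-person count of messages exchanged with the *other* group."""
    per_person = Counter()
    for sender, recipient in messages:
        g_s, g_r = group_of.get(sender), group_of.get(recipient)
        if g_s is None or g_r is None or g_s == g_r:
            continue  # skip unknown people and within-group traffic
        per_person[sender] += 1
        per_person[recipient] += 1
    return per_person


def possibly_misallocated(messages, group_of, threshold=0.5):
    """People whose share of messages with their own group is below threshold.

    Assumption: the threshold is illustrative only.
    """
    same = defaultdict(int)
    total = defaultdict(int)
    for sender, recipient in messages:
        if sender not in group_of or recipient not in group_of:
            continue
        # Count the message once from each participant's point of view.
        for person, other in ((sender, recipient), (recipient, sender)):
            total[person] += 1
            if group_of[person] == group_of[other]:
                same[person] += 1
    return sorted(p for p in total if same[p] / total[p] < threshold)
```

Summing `cross_group_volume(...).values()` and halving the result gives the total cross-group traffic. That total captures the many weak ties in observation (1), which look insignificant one at a time.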

Organisational Network Analysis: Actionable Insight during Restructuring

I have previously described how influence can be measured from data that organisations regularly collect. Take a look again at two people: A and B (B reports to A):

You can see how B is starting to diverge from A and lose influence, so what is the underlying cause? The organisation has been undergoing restructuring and reducing headcount, which has affected A and B to different extents. Look at the graphs of their strongest ties in the diagrams below: the blue nodes are current employees and the red nodes are employees who have, at this point in time, departed the organisation:

A:

B:

Notice how there are more red nodes central to B’s social network than to A’s. B may be feeling disconnected from the organisation because so many people they knew well have left, but A is not feeling this to the same extent and so might not understand why B is showing reduced engagement with the organisation. I hope you can see that organisational network analysis offers a way to quickly measure the impact of a re-organisation on individuals, so that action can be taken to support them whilst they rebuild their social networks.
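To put a number on this comparison, one option is the share of a person's strongest ties who have left. This is my assumption rather than a standard metric. The sketch assumes `tie_weights` maps each colleague to a tie strength for one person (for example message counts) and `departed` is a set of leavers taken from HR records. The `top_n` cut-off is arbitrary:

```python
def departed_share_of_top_ties(tie_weights, departed, top_n=10):
    """Fraction of a person's `top_n` strongest ties who have left.

    Assumptions: `tie_weights` maps colleague -> tie strength for one person
    and `departed` is a set of leavers from HR records.
    """
    strongest = sorted(tie_weights, key=tie_weights.get, reverse=True)[:top_n]
    if not strongest:
        return 0.0
    return sum(1 for colleague in strongest if colleague in departed) / len(strongest)


# Hypothetical ties for A and B.
a_ties = {"p": 10, "q": 8, "r": 5, "s": 1}
b_ties = {"p": 9, "t": 7, "u": 6, "v": 2}
departed = {"s", "t", "u"}
print(departed_share_of_top_ties(a_ties, departed, top_n=3))  # 0.0
print(departed_share_of_top_ties(b_ties, departed, top_n=3))
```

Tracking this figure for everyone over the course of a restructuring could highlight people in B's position before their engagement visibly drops.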

Unusual Sources of Organisational Network Analysis Data

In the organisation I’ve studied, email is the undisputed winner at exposing the organisational network. I’ve previously described a number of other data sources for organisational network analysis, but are there others?

Some of the more obvious ones are telephone records, both desk and mobile phones. These would be relatively simple to analyse, provided they are easily matched to employees, and I expect the results would be quite good.

But there are some others I’ve noticed:

- Conference call dial-in numbers: these capture employees joining meetings by phone. There would be some overlap with meeting room bookings, but as both refer to the same meeting time, de-duplication is possible.

- Entry control systems: people arriving or leaving a particular building or floor at the same time are likely to have shared some conversation.

- Train, Air and Hotel bookings: employees making the same trips are fairly likely to be spending time together discussing both business and other interests and maybe even having a drink in the hotel bar!

- Electronic payments for vending machines and catering: employees who have used a particular electronic payment point at the same time might have had a discussion over a coffee.

- Car pools: if the organisation manages a car pool scheme it is fairly certain those sharing a car will get to know each other.
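Most of these sources reduce to the same question: who was at the same place at roughly the same time? The following is a minimal sketch of that co-presence idea. It assumes events arrive as `(person, location, timestamp)` records. The one-minute window and the sample door name are hypothetical:

```python
from collections import Counter, defaultdict
from datetime import datetime, timedelta


def co_presence_edges(events, window=timedelta(minutes=1)):
    """Count pairs of different people seen at the same location within `window`.

    Assumption: events are (person, location, timestamp) records, e.g. from an
    entry control system; the default window is illustrative only.
    """
    by_location = defaultdict(list)
    for person, location, ts in events:
        by_location[location].append((ts, person))
    edges = Counter()
    for entries in by_location.values():
        entries.sort()  # chronological order within each location
        for i, (ts_i, p_i) in enumerate(entries):
            j = i + 1
            # Only look ahead while the next event is still inside the window.
            while j < len(entries) and entries[j][0] - ts_i <= window:
                p_j = entries[j][1]
                if p_j != p_i:
                    edges[tuple(sorted((p_i, p_j)))] += 1
                j += 1
    return edges


t0 = datetime(2013, 7, 1, 8, 30)
sample = [
    ("alice", "door-3", t0),
    ("bob", "door-3", t0 + timedelta(seconds=20)),
    ("carol", "door-3", t0 + timedelta(minutes=10)),
]
print(co_presence_edges(sample))  # Counter({('alice', 'bob'): 1})
```

Repeated co-presence accumulates in the counts, so a pair who arrive together every morning end up with a much stronger edge than a one-off coincidence. The window would need tuning for each source: seconds for a turnstile, perhaps hours for a hotel booking.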

I’ve not had the opportunity to explore any of these data sets, so I’d like to hear from anyone who has. But is this going too far? What are your thoughts?

Identifying relevant tweeters: keywords and stemming

I’ve previously described why an organisation would want to analyse Twitter and described my initial architecture for achieving a targeted analysis. The targeting relies on identifying tweeters who are relevant to the organisation’s aims, in order to keep the size of the network manageable and remove the ‘noise’ of irrelevant tweeters. To date, identifying ‘relevant’ tweeters has relied on scoring each tweet against a list of keywords; this is somewhat crude and I’ve been looking at simple ways to improve it. I’ve been aware of stemming for a while and have now found a C# implementation, along with some others. There is a good explanation of stemming on Wikipedia, so I won’t try to repeat it. To apply it, first run all the keywords through the stemming algorithm, then run all the words in the tweet through the algorithm before comparing. This should produce more matches, or remove the need to capture all the variations (plurals, tenses, etc.) of each word you are interested in. It’s definitely not perfect, and I am aware there are more sophisticated approaches which take context into account, but I think it will be better than plain keywords. I’ve not yet compared the results against a simple keyword list; before I do, does anyone have any further guidance?
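The stem-then-compare step can be sketched as follows. This is not the C# implementation mentioned above. `crude_stem` is a deliberately crude suffix stripper that stands in for a real stemmer such as Porter or Snowball, and the keywords and tweet are made up:

```python
import re

# Assumption: a deliberately crude suffix stripper standing in for a real
# stemmer (Porter, Snowball, etc.); it only handles a few English endings.
SUFFIXES = ("ing", "ed", "es", "s", "e")


def crude_stem(word):
    w = word.lower()
    for suffix in SUFFIXES:
        # Keep at least three characters so short words are left alone.
        if w.endswith(suffix) and len(w) - len(suffix) >= 3:
            return w[: -len(suffix)]
    return w


def relevance_score(tweet, keywords):
    """Count tweet words whose stem matches the stem of any keyword."""
    keyword_stems = {crude_stem(k) for k in keywords}
    tokens = re.findall(r"[a-z]+", tweet.lower())
    return sum(1 for token in tokens if crude_stem(token) in keyword_stems)


keywords = ["outsource", "network"]
tweet = "We are outsourcing our networks"
print(relevance_score(tweet, keywords))  # 2
plain = sum(1 for t in tweet.lower().split() if t in keywords)
print(plain)  # 0: exact keyword matching misses both words
```

Swapping `crude_stem` for a proper stemmer leaves the comparison logic unchanged. The main design point is that keywords and tweet words must go through the same stemmer.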

Mapping the Graph of Retweets

In the pre-print book Twitter Data Analytics, section 5.1.1.1 (‘Retweet Network Fallacy’) describes the problem that, with a retweet, you can only tell who the original tweeter is and not the network through which it propagated.

I’ve been doing some work on using retweets to help understand the strength of connections in a social network (you can see some early results here), and this is an issue. I believe it can be at least partially resolved by using follower data.

I’ve previously discussed Twitter Followers and I don’t think this concept takes too much explanation. Here is a simple example of a followers network: Peter is followed by John who is, in turn, followed by Alice and Bob, etc.

Now let’s look at the problem described in Twitter Data Analytics, retweets: in this simple example Peter is retweeted by John who is, in turn, retweeted by Alice and Bob.

But if you look purely at retweet data returned by the Twitter API you will see this:

It appears that John, Alice and Bob retweeted Peter; the structure of the network is lost.

Let’s look at this again with the retweets overlaid on the followers network:

How does this help? Well, first load the follower network into a graph database and then look at each retweeter. Graph databases are excellent at searching relationships and finding paths, so start with John and Alice:

In this simple case there is a single path from John back to Peter with no intermediate nodes, so it’s quite likely John retweeted Peter directly. The only path from Alice back to Peter is through John (and we know John retweeted), so it is quite likely that Alice saw John’s retweet and retweeted that.
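The reasoning for John and Alice can be sketched as a simple rule. A retweeter's likely immediate sources are the accounts they follow that are either the original author or someone known to have retweeted. This is a minimal sketch that assumes the follower data is a dict mapping each user to the set of accounts they follow. In practice the query would run in the graph database, and the Mary and Jim entries are hypothetical:

```python
def likely_sources(follows, author, retweeters, user):
    """Accounts `user` follows that could have put the tweet on their timeline."""
    return sorted(
        account
        for account in follows.get(user, set())
        if account == author or account in retweeters
    )


# Hypothetical follower data based on the example: who each user follows.
follows = {
    "John": {"Peter"},
    "Alice": {"John"},
    "Bob": {"John", "Mary", "Jim"},
    "Mary": {"Peter"},
}
retweeters = {"John", "Alice", "Bob"}

for user in sorted(retweeters):
    print(user, likely_sources(follows, "Peter", retweeters, user))
```

With this data, Bob's only candidate is John, because Mary is not known to have retweeted. If Mary's tweets showed that she had, she would join the list, which is the "John and Mary retweeted" case.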

Now humans, being complicated things, make complicated social networks. Let’s take a closer look at Bob:

There are multiple paths from Bob back to Peter, and we could take one of the following strategies: look at only the shortest paths, look at all paths, or set a limit on path lengths (if we set a limit of 2, also the shortest, we will only identify the paths through John and Mary but not Jim). I would suggest, if possible, looking at all paths, but this will have performance consequences for very large graphs.
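The three strategies differ only in how far the search is allowed to go. This is a minimal depth-first sketch that uses the same "who follows whom" dict as a stand-in for the graph database. `max_hops=None` corresponds to looking at all paths. The example does not say how Jim connects to Peter, so the intermediate account `Sue` is hypothetical:

```python
def paths_to_author(follows, start, author, max_hops=None):
    """All simple paths start -> ... -> author along 'follows' edges.

    `follows` maps each user to the set of accounts they follow (an assumed
    in-memory stand-in for the graph database). max_hops=None means no limit.
    """
    found = []

    def walk(node, path):
        if node == author:
            found.append(path)
            return
        if max_hops is not None and len(path) - 1 >= max_hops:
            return  # hop budget used up without reaching the author
        for nxt in sorted(follows.get(node, ())):
            if nxt not in path:  # simple paths only: never revisit a user
                walk(nxt, path + [nxt])

    walk(start, [start])
    return found


# Hypothetical follower data: Sue is an invented link between Jim and Peter.
follows = {
    "Bob": {"John", "Mary", "Jim"},
    "John": {"Peter"},
    "Mary": {"Peter"},
    "Jim": {"Sue"},
    "Sue": {"Peter"},
}
print(paths_to_author(follows, "Bob", "Peter"))              # all paths
print(paths_to_author(follows, "Bob", "Peter", max_hops=2))  # John and Mary only
```

The number of simple paths can grow exponentially with graph size, which is the performance cost mentioned above. Graph databases, and libraries such as NetworkX (`all_simple_paths` with a `cutoff`), offer the same length-limited search.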

The next question is whether we want to look at all the tweets from Mary and Jim to see if they also retweeted the original (this can be expensive, in API time or if buying tweets). If not, then we don’t know the actual path, but we can estimate the likelihood of each path having been taken, perhaps with the shortest being most likely (I’m not exactly sure how the maths should work here… help me out), or maybe there is some historical data we have about existing paths.

If examining each person’s tweets then consider the following possibilities, from the above example:

- Only John retweeted: the path is most likely via John

- John and Mary retweeted: it’s equally likely to have come via John or Mary, unless there is some historic data suggesting stronger existing ties to one of them

- John, Mary and Jim retweeted: I’m not sure. Are the shorter paths more likely? I guess they are, as they would have placed the retweet on the user’s timeline first, but the path through Jim cannot be completely dismissed. I’m wondering if infer.net could help here?
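I don't have an answer on the maths. One simple heuristic, which is an assumption rather than an established model, is to give each path a weight that shrinks geometrically with its length and then normalise by first hop. Equal-length paths then come out equally likely, which matches the "John and Mary retweeted" case. The longer path through Jim keeps a small share rather than being dismissed. The `decay` value of 0.5 is arbitrary:

```python
def first_hop_likelihood(paths, decay=0.5):
    """Relative likelihood of each first hop, weighting paths by decay ** hops.

    Assumption: a heuristic only. Each path is a list [retweeter, ..., author]
    with at least one hop; `decay` (0 < decay <= 1) is arbitrary.
    """
    weights = {}
    for path in paths:
        first_hop = path[1]
        weights[first_hop] = weights.get(first_hop, 0.0) + decay ** (len(path) - 1)
    total = sum(weights.values())
    if total == 0:
        return {}
    return {hop: w / total for hop, w in weights.items()}


bob_paths = [
    ["Bob", "Jim", "Sue", "Peter"],  # Sue is a hypothetical intermediate
    ["Bob", "John", "Peter"],
    ["Bob", "Mary", "Peter"],
]
print(first_hop_likelihood(bob_paths))
```

Here Jim gets 0.2, and John and Mary get 0.4 each. Paths through people known not to have retweeted could be removed before normalising, and historical tie strengths could replace the fixed decay.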

Finally consider this:

Well who knows? Maybe there are some protected Twitter accounts or maybe Steve picked this up because of a hashtag, which is a whole other topic.

Has anyone out there already answered these questions? If so, please get in touch.

When a social network knows it is being watched does it change?

There is tremendous value in analysing social networks, both internally to an organisation, and looking at the social networks of the organisation’s customers, suppliers, industry influencers etc. but what happens when that community becomes aware that their publications and interactions are being analysed?

I asked this question at Social Data Week ’13 in London and the panel’s answer was that it did: you can already observe people taking advantage of this in expecting some sort of reward for following, or otherwise being associated with, organisations. This sounds fairly innocuous but I am more concerned about observing networks inside the organisation.

Think about the following scenario: an organisation analyses IMs to gauge sentiment and presents this information by department; one department has noticeably more negative sentiment than the others; the department’s manager is told and asked to devise and implement a plan to improve the situation. What could they decide to do? There are three options:

1. The right thing: find the root causes and address them

2. The lazy thing: don’t do anything, hope it improves

3. The wrong thing: tell members of the department the communications are being monitored and not to use negative language

I have actually observed the wrong thing being done when it comes to staff surveys (which amongst other things are trying to gauge staff sentiment about the organisation): the manager of the department let it be known they did not want to see negative ratings of management in the survey, presumably because the results of the survey had some bearing on their bonus. I fear the same thing would happen if social network analysis and/or sentiment analysis were being used.

Another option an organisation has is to use surveys to build a picture of the social network (I’ve recently exchanged some views with TECI, who take this approach). In this case it’s clear that the organisation is collecting the data, but I wonder how accurate this is; I think people may either not answer entirely honestly or simply forget certain connections in their network because they don’t seem important (but they could be very important in the overall network). I’d love to know if anyone has any studies that compare networks derived from surveys with those derived from communications data. My guess is that doing both and combining the results would give the most accuracy.

So if an organisation does want to use communications data for SNA, what should it do? Having thought about this, I think the answer is to first baseline the communications data, then announce that the organisation has such an intent (assuring staff that it will be analysed anonymously), and finally observe the communications data to see if there is a change from the baseline. The next step depends on the result: if there is very little change then it’s probably OK to carry on, but if there is a noticeable change then this is telling the organisation something, and it needs to understand why there was a change before proceeding.
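The baseline comparison could start as simply as the sketch below. It assumes each period is summarised as a mapping from a `(person, person)` pair to a message count, with both periods of equal length. It reports the relative change in volume and the Jaccard similarity of the sets of ties (how many ties survive). Deciding which change counts as "noticeable" is a judgement call, so the sketch deliberately sets no threshold:

```python
def compare_periods(baseline_edges, after_edges):
    """Compare two equal-length periods of communication data.

    Each argument maps a (person, person) pair to a message count (assumed
    shape). Returns (relative change in volume, Jaccard similarity of ties).
    """
    base_total = sum(baseline_edges.values())
    after_total = sum(after_edges.values())
    volume_change = (after_total - base_total) / base_total if base_total else float("nan")
    base_ties, after_ties = set(baseline_edges), set(after_edges)
    union = base_ties | after_ties
    jaccard = len(base_ties & after_ties) / len(union) if union else 1.0
    return volume_change, jaccard


# Hypothetical counts before and after the announcement.
baseline = {("a", "b"): 10, ("a", "c"): 10}
after = {("a", "b"): 8, ("b", "c"): 7}
print(compare_periods(baseline, after))
```

A fall in volume with stable ties would suggest people are communicating less. A low Jaccard score would suggest that who talks to whom has shifted, which may matter more for SNA.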

Does anyone know of any studies, or have any experience of, social networks changing if they become aware they are observed?

Effectiveness of Large Meetings Revisited

Some time ago I wrote about examining the number of emails sent during meetings and concluded by saying “looking at instant message traffic during meetings would be more revealing”. I now have some IM traffic to compare.

Before continuing, I would like to emphasise that all this data is anonymous, and I do not believe it should be examined in any other way: humans are more complex than any analysis of this nature can reveal, so it is only useful for finding biases towards a particular behaviour in a particular situation or for identifying trends.

Because I do not have IM data for the same period as my original post, I first re-created the email analysis, restricting it to meetings whose attendees were all internal, because the IM system is only available to employees of the organisation:

This is similar to the previous analysis, except that fewer emails are seen from smaller meetings. This could be due to some major changes in the organisation, but I’m not investigating that now.

And now the IMs:

Well, not the result I expected, but this is what the data tells us: there is no obvious pattern to IM use relative to meeting size. I’m curious whether there might be something buried in here: for example, is it always the same people using IM in meetings, are they messaging others in the meeting or people who are not in it, and does this ratio change with meeting size?
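The last of those questions could be explored with a join like the one below. This is a minimal sketch with assumed record shapes: meetings as `(start, end, attendees)` and messages as `(sender, recipients, timestamp)`. It counts messages that attendees send during each meeting, grouped by meeting size, and reports what share went to at least one non-attendee. The sample data is made up:

```python
from collections import defaultdict
from datetime import datetime, timedelta


def in_meeting_stats(meetings, messages):
    """Messages sent by attendees during meetings, grouped by meeting size.

    Assumed shapes: meetings are (start, end, set_of_attendees); messages are
    (sender, set_of_recipients, timestamp). Returns
    {meeting_size: (messages_sent, share_sent_to_non_attendees)}.
    """
    sent = defaultdict(int)
    outside = defaultdict(int)
    for start, end, attendees in meetings:
        size = len(attendees)
        for sender, recipients, ts in messages:
            if sender in attendees and start <= ts < end:
                sent[size] += 1
                if any(r not in attendees for r in recipients):
                    outside[size] += 1
    return {size: (sent[size], outside[size] / sent[size]) for size in sent}


t = datetime(2013, 7, 1, 10, 0)
meetings = [
    (t, t + timedelta(hours=1), {"a", "b", "c"}),
    (t + timedelta(hours=2), t + timedelta(hours=3), {"a", "d"}),
]
ims = [
    ("a", {"b"}, t + timedelta(minutes=10)),   # to someone in the same meeting
    ("b", {"x"}, t + timedelta(minutes=20)),   # to someone outside it
    ("d", {"a"}, t + timedelta(minutes=150)),  # during the second meeting
    ("c", {"z"}, t + timedelta(minutes=90)),   # between meetings: ignored
]
print(in_meeting_stats(meetings, ims))  # {3: (2, 0.5), 2: (1, 0.0)}
```

Before comparing sizes, the counts would need dividing by the number of meetings and attendees of each size. The nested loop is fine for a sample, but a full set of logs would want messages indexed by timestamp.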

Email vs. Instant Messaging for Social Network Analysis, Round 4

In the organisation I’ve been studying, I’ve previously described how Instant Messaging (IM) makes a small contribution to the overall understanding of the organisational/social network, but this did not tell us whether there was anything to learn about when people communicate. To examine this, I’ve summarised IMs and emails sent by day, day of week and hour:

The following chart shows daily activity over a two month period. Note that the dip in emails around 20/07/2013 is due to a number of missing Exchange Server log files.

It can be observed that email is more popular and that the pattern of email and IMs is fairly regular when viewed at this scale. The only slightly unexpected observation is that IMs are more popular mid-week whereas email is more mixed.
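The summarising step itself is straightforward. This is a minimal sketch, assuming the logs have already been reduced to `(channel, timestamp)` pairs with channel values such as "email" and "im" (a hypothetical shape). It counts messages per calendar day, per weekday and per hour:

```python
from collections import Counter
from datetime import datetime

WEEKDAYS = ("Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun")  # index = weekday()


def summarise(events):
    """Counts per (channel, day), (channel, weekday) and (channel, hour).

    Assumption: events are (channel, timestamp) pairs, channel e.g. "email"/"im",
    already extracted from the Exchange logs and the IM system.
    """
    by_day, by_weekday, by_hour = Counter(), Counter(), Counter()
    for channel, ts in events:
        by_day[(channel, ts.date())] += 1
        by_weekday[(channel, WEEKDAYS[ts.weekday()])] += 1
        by_hour[(channel, ts.hour)] += 1
    return by_day, by_weekday, by_hour


events = [
    ("im", datetime(2013, 7, 17, 8, 15)),  # a Wednesday
    ("email", datetime(2013, 7, 17, 16, 40)),
    ("im", datetime(2013, 7, 18, 8, 5)),   # a Thursday
]
by_day, by_weekday, by_hour = summarise(events)
print(by_weekday[("im", "Wed")], by_hour[("im", 8)])  # 1 2
```

Missing source data, such as the absent Exchange log files, shows up as low or missing `by_day` counts rather than as an error. It is therefore worth checking for unexpectedly quiet days before reading anything into the pattern.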

The next question is: does time of day make a difference?

You can see some interesting differences emerging, but to make it clearer I have produced a chart showing the percentages of each communication mechanism:

Here you can see some clear trends: IMs are more likely to be sent when people are first starting work (in the morning, 07:00-09:00, and after lunch, 13:00-14:00), whereas email dominates the end of the working day (16:00 onwards). Without further study, the reason can only be speculation, but my theory is that IMs are used more informally, by people who are socially close exchanging greetings, whereas email is more formal and is used to evidence a day’s completed work.
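Turning the counts into percentages only requires dividing by the total for each time bucket. This is a minimal sketch with the same assumed `(channel, timestamp)` input. The `bucket` function chooses the grouping, for example hour of day or weekday. The sample events are made up:

```python
from collections import Counter
from datetime import datetime


def channel_share(events, bucket):
    """Percentage of messages per channel within each bucket.

    `events` are (channel, timestamp) pairs (assumed shape); `bucket` maps a
    timestamp to a group, e.g. the hour of day or the weekday.
    """
    counts = Counter((bucket(ts), channel) for channel, ts in events)
    totals = Counter(bucket(ts) for _, ts in events)
    return {(b, channel): 100.0 * n / totals[b] for (b, channel), n in counts.items()}


events = [
    ("im", datetime(2013, 7, 17, 8, 15)),
    ("im", datetime(2013, 7, 17, 8, 40)),
    ("email", datetime(2013, 7, 17, 8, 50)),
    ("email", datetime(2013, 7, 17, 16, 5)),
]
by_hour = channel_share(events, lambda ts: ts.hour)
by_weekday = channel_share(events, lambda ts: ts.weekday())  # 0 = Monday
print(by_hour[(16, "email")])  # 100.0
```

Percentages hide volume. An hour with very few messages can show a dramatic share, so it is worth plotting the totals alongside the percentages.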

And what about Wednesdays? How does that look when we turn the actual numbers into percentages?

Well, yes, Wednesday is definitely the most popular day for IMs. I can offer absolutely no theory as to why this is, and I’d welcome any suggestions.

theguardian.com: Research finds ‘US effect’ exaggerates results in human behaviour studies

Interesting article on theguardian.com: there may be a bias towards ‘exciting’ results from U.S. researchers in the human behaviour field. Social Network Analysis draws on Graph Theory to state facts about the nodes/vertices and edges in a graph derived from human interaction, but interpreting what this means is more subjective and, because these fields of study overlap, similar biases are likely to creep in. Do I think this is a problem? Not really. I think most people will look at a number of studies to get a balanced view of their findings and, in my opinion, it’s best to try applying the results to your own data before accepting them conclusively.
